A link extractor is an object that extracts links from responses.

The

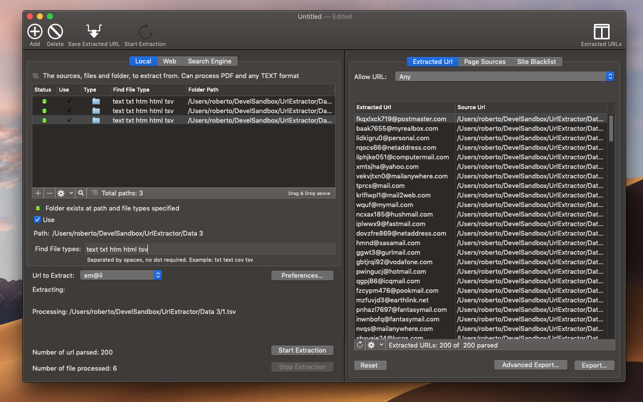

__init__ method ofLxmlLinkExtractor takes settings thatdetermine which links may be extracted. LxmlLinkExtractor.extract_links returns alist of matching Link objects from aResponse object.Dec 10, 2019 It can inspect the contents of partially downloaded file and can also extract original download URL as well. With Ultra File Opener, you can copy original download URLs, resume or restart downloads. With the software you can open and view 500 different file types including images, documents, photos and more. URL Extractor extracts email addresses and URLs from files, the Web and through search engines. You can start from a single website and browse all the links inside looking emails or URL to extract and save all in HD user. You can also extract a single file or the entire contents of a folder on your HD at any nested level. URL Extractor is a standard Cocoa Document based application to extract URLs form your local disk or from the web using a series of user settings and data. A window can be saved on disk as a URL Extractor document with all the settings and data used for the extraction and reopened at later time. Jobertabma / relative-url-extractor. Watch 20 Star 461 Fork 81 A small tool that extracts relative URLs from a file. 461 stars 81 forks Star Watch Code. Right-click the image. Once you've found the image that you want to get the URL for, right-clicking it will prompt a drop-down menu to appear. If you're using a Mac with a one-button mouse, hold Ctrl and click the image to open the right-click menu.

Link extractors are used in CrawlSpider spiders through a set of Rule objects.

You can also use link extractors in regular spiders. For example, you can instantiate LinkExtractor as a class variable in your spider and use it from your spider callbacks.

Link extractor reference

The link extractor class is scrapy.linkextractors.lxmlhtml.LxmlLinkExtractor. For convenience it can also be imported as scrapy.linkextractors.LinkExtractor.

LxmlLinkExtractor

scrapy.linkextractors.lxmlhtml.LxmlLinkExtractor(allow=(), deny=(), allow_domains=(), deny_domains=(), deny_extensions=None, restrict_xpaths=(), restrict_css=(), tags=('a', 'area'), attrs=('href',), canonicalize=False, unique=True, process_value=None, strip=True)

LxmlLinkExtractor is the recommended link extractor with handy filtering options. It is implemented using lxml’s robust HTMLParser.

- allow (str or list) – a single regular expression (or list of regular expressions) that the (absolute) URLs must match in order to be extracted. If not given (or empty), it will match all links.

- deny (str or list) – a single regular expression (or list of regular expressions) that the (absolute) URLs must match in order to be excluded (i.e. not extracted). It has precedence over the allow parameter. If not given (or empty), it won’t exclude any links.

- allow_domains (str or list) – a single value or a list of strings containing domains which will be considered for extracting the links.

- deny_domains (str or list) – a single value or a list of strings containing domains which won’t be considered for extracting the links.

- deny_extensions (list) – a single value or list of strings containing extensions that should be ignored when extracting links. If not given, it will default to scrapy.linkextractors.IGNORED_EXTENSIONS.

  Changed in version 2.0: IGNORED_EXTENSIONS now includes 7z, 7zip, apk, bz2, cdr, dmg, ico, iso, tar, tar.gz, webm, and xz.

- restrict_xpaths (str or list) – an XPath (or list of XPaths) which defines regions inside the response where links should be extracted from. If given, only the text selected by those XPaths will be scanned for links.

- restrict_css (str or list) – a CSS selector (or list of selectors) which defines regions inside the response where links should be extracted from. Has the same behaviour as restrict_xpaths.

- restrict_text (str or list) – a single regular expression (or list of regular expressions) that the link’s text must match in order to be extracted. If not given (or empty), it will match all links. If a list of regular expressions is given, the link will be extracted if it matches at least one.

- tags (str or list) – a tag or a list of tags to consider when extracting links. Defaults to ('a', 'area').

- attrs (list) – an attribute or list of attributes which should be considered when looking for links to extract (only for those tags specified in the tags parameter). Defaults to ('href',).

- canonicalize (bool) – canonicalize each extracted URL (using w3lib.url.canonicalize_url). Defaults to False. Note that canonicalize_url is meant for duplicate checking; it can change the URL visible at server side, so the response can be different for requests with canonicalized and raw URLs. If you’re using LinkExtractor to follow links, it is more robust to keep the default canonicalize=False.

- unique (bool) – whether duplicate filtering should be applied to extracted links.

- process_value (collections.abc.Callable) – a function which receives each value extracted from the tag and attributes scanned and can modify the value and return a new one, or return None to ignore the link altogether. If not given, process_value defaults to lambda x: x. A typical use is recovering the real URL from an attribute value that wraps it in other text, such as a JavaScript call.

- strip (bool) – whether to strip whitespace from extracted attributes. According to the HTML5 standard, leading and trailing whitespace must be stripped from href attributes of <a>, <area> and many other elements, the src attribute of <img> and <iframe> elements, etc., so LinkExtractor strips space chars by default. Set strip=False to turn it off (e.g. if you’re extracting URLs from elements or attributes which allow leading/trailing whitespace).
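The process_value hook is easiest to follow on a concrete value. The snippet below is a minimal sketch, assuming an href of the form javascript:goToPage('../other/page.html'); return false. That value, the GO_TO_PAGE pattern and the function name extract_js_target are all hypothetical, chosen only for illustration.

```python
import re

# Hypothetical pattern: the real URL is the first argument of a goToPage(...) call.
GO_TO_PAGE = re.compile(r"javascript:goToPage\('(.*?)'")


def extract_js_target(value):
    """Return the URL wrapped in a goToPage(...) call, or the value unchanged."""
    match = GO_TO_PAGE.search(value)
    if match:
        return match.group(1)
    # Plain URLs pass through untouched; returning None here instead
    # would tell the link extractor to ignore the link altogether.
    return value


raw_href = "javascript:goToPage('../other/page.html'); return false"
cleaned = extract_js_target(raw_href)
```

A function like this would be passed as the process_value argument when the link extractor is created. Whether unmatched values should pass through or be dropped (by returning None) is a design choice that depends on the pages being crawled.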

extract_links(response)

Returns a list of Link objects from the specified response. Only links that match the settings passed to the __init__ method of the link extractor are returned. Duplicate links are omitted when unique is True (the default).

Link

scrapy.link.Link(url, text='', fragment='', nofollow=False)

Link objects represent an extracted link by the LinkExtractor.


The parameters are illustrated with a sample anchor tag whose href is https://example.com/nofollow.html#foo and whose text is "Dont follow this one":

- url – the absolute URL being linked to in the anchor tag. From the sample, this is https://example.com/nofollow.html.

- text – the text in the anchor tag. From the sample, this is "Dont follow this one".

- fragment – the part of the URL after the hash symbol. From the sample, this is foo.

- nofollow – an indication of the presence or absence of a nofollow value in the rel attribute of the anchor tag.
